In the age of ChatGPT‑style assistants, a paragraph that “sounds right” can appear in seconds. Yet the very thing that makes AI so attractive, its ability to produce plausible text on demand, is also its Achilles heel and a force that pushes you to own its mistakes.

If your product’s promise rests on those “plausible” outputs, you’re trading certainty for a statistical gamble. We’ve moved from great code autocompleters to “it’s been a month since I wrote code for my MRs”, and every release, feature by feature, keeps widening the product’s “surface of statistical gambleness”.

All-in? Is the four-eyes principle on merge requests enough of a stop loss?

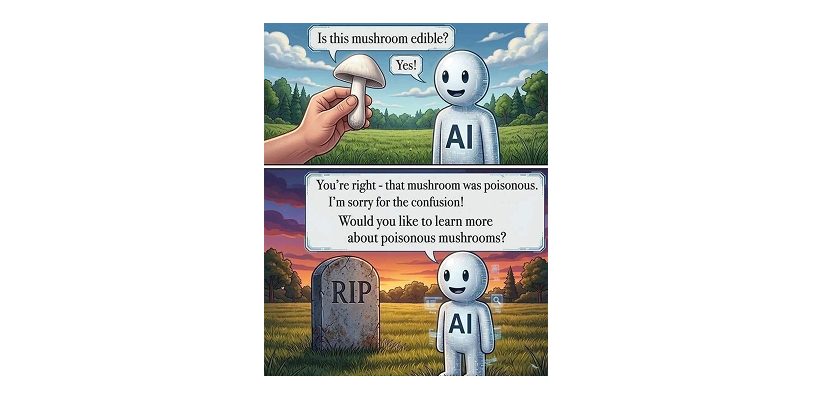

Once that is seen clearly, the follow-up question is whether the trade is worth it. As a software product builder or engineer, have I opened a winning position, or am I REKT? Wait! How’s my internal software design? Is it defensive enough? Is it self-healing enough? What in this service makes it impossible for an AI agent to wipe out a production database and later say “Sorry for the confusion I might have caused! Do you want to know more about poisonous mushrooms”?
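To make "defensive enough" concrete, here is a minimal sketch of a deny-by-default gate that sits between an agent and the database. Everything in it is hypothetical: the `guard_statement` name, the `environment` labels, and the keyword list are assumptions for illustration. A regex is a naive check, and real enforcement belongs in database permissions, but the shape is the point: the refusal is deterministic and does not depend on the model behaving.

```python
import re
from typing import Optional

# Hypothetical policy: statement types an agent may not run on production unaided.
DESTRUCTIVE = re.compile(r"^\s*(drop|truncate|delete|alter)\b", re.IGNORECASE)


class BlockedStatement(Exception):
    """Raised when the guard refuses a statement."""


def guard_statement(sql: str, environment: str,
                    approved_by: Optional[str] = None) -> str:
    """Return sql unchanged if allowed, otherwise raise BlockedStatement."""
    if environment == "production" and DESTRUCTIVE.match(sql) and not approved_by:
        # No named human approver, no destructive statement on production.
        raise BlockedStatement(
            f"refused without human approval: {sql.strip()[:40]}"
        )
    return sql
```

A read passes straight through, while `guard_statement("DROP TABLE users", "production")` raises unless a human name is supplied in `approved_by`.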

You own the mistakes because that’s how the universe acknowledges you are grounded. Natural selection invented reputation in Homo sapiens to keep us honest with one another.

AI models that are deliberately designed to stay just and real will start to make a difference.

Sorry, but vibe coding your toy tool is not the software engineering equivalent of computational engineering, the discipline that produces remarkable designs that perform like no other and are safe to fly.

Here is a paraphrase every software engineer needs to sit with:

You can ignore the consequences of poor AI engineering but you cannot ignore the consequences of ignoring the consequences of poor AI engineering.

An AI model outputs what a matrix has been tuned to imitate, not what it knows. Nothing in that stack owns the answer. Algebraic parrots will never know anything; they may at most improve mimicry and eventually gain enough memory to become better agentic Pinocchios. Since a model’s reasoning is stochastic and opaque, the content it generates is an imitation of a report and the opposite of a primary source.

For text-based models, the only thing they can be a primary source for is the output of an automated hermeneutic engine: the fact that they can present the user with text that analyzes other text.

So the real question for your product and business is: what are the origins and sources of the confidence you hand to your users? Your brand is the frame in which they are served, but what’s the foundation? An incorrect answer to that is: 100% AI model generated. If everything is synthetically generated, who or what guarantees, demonstrably and at every layer, that it stays rooted in the real world?

If it’s not 100%, how much then? And is its chain of inputs and functions verifiable?

Did you do your homework so my mental model about your product can be clear and stable? Without that, I can’t possibly find your service dependable.

Anyone sane knows there is a Hierarchy of Truth.

Human writers with creative and contextual understanding are traceable through reputation — modest or outsized.

A deterministic layer guarantees a predictable outcome — the confidence you can quantify. AI, by contrast, is a black box that fills gaps probabilistically, which means the output may drift as new data or prompt variations are introduced, or even when you reuse the same prompt in a different model.

A system is only as strong as its weakest link. If the source of your content is an AI, every downstream process inherits that uncertainty — your features, your guarantees, your support tickets, your audit logs. The rot doesn’t stay where it started; it propagates with the confidence of a clean function call.

We used to say garbage in, garbage out. The new version is darker: even with clean inputs, if the transformation itself is non‑deterministic, integrity is gone before it reaches the user. GIGO 2.0 is when the data is fine and the output still drifts because the middle of the pipeline is a statistical hum. You don’t fix that with a better prompt. You fix it by removing the gambling from the layers that were never meant to gamble.
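One way to find out which layers are gambling is to run each transformation several times on the same input and compare fingerprints. The sketch below assumes JSON-serializable values; `is_repeatable`, `normalize`, and `noisy` are hypothetical names, and `noisy` only stands in for a sampled model call. Passing the check is evidence of determinism, not proof of it; failing it is conclusive.

```python
import hashlib
import json
import random
from typing import Any, Callable


def fingerprint(value: Any) -> str:
    """Stable SHA-256 of a JSON-serializable value."""
    encoded = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def is_repeatable(transform: Callable[[Any], Any], sample: Any,
                  runs: int = 5) -> bool:
    """True if transform yields the same fingerprint on every run for sample."""
    return len({fingerprint(transform(sample)) for _ in range(runs)}) == 1


def normalize(record: dict) -> dict:
    # Deterministic layer: same input, same output.
    return {key.lower(): str(value).strip() for key, value in record.items()}


def noisy(record: dict) -> dict:
    # Stand-in for a sampled model call: the "statistical hum" in the middle.
    return {**record, "score": random.random()}
```

Here `is_repeatable(normalize, {"Name": " Ada "})` holds, while the same check on `noisy` fails because its output changes from run to run.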

And here’s where epistemology stops being a philosophy class and becomes an engineering concern. Knowing something requires a chain of custody — a receipt that records where the information came from and how it was derived. Court evidence works this way. So does scientific publishing. So does your bank statement. AI outputs have no such chain. They are imitations of reports, owned by the engineers and founders who shipped them, not by the content itself. There is no provenance trail or accountable witness to subpoena.
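For the deterministic layers, a receipt can be as small as a record of the step, where its input came from, and hashes of what went in and what came out. This is a sketch under assumptions, not a standard: the `Receipt` fields, `apply_with_receipt`, and the `ledger.csv` source label are all hypothetical, and values are assumed to be JSON-serializable.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Any, Callable, List


def digest(value: Any) -> str:
    """Stable SHA-256 of a JSON-serializable value."""
    encoded = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


@dataclass(frozen=True)
class Receipt:
    step: str         # name of the transformation
    source: str       # where the input came from (a file, or the previous step)
    input_hash: str   # fingerprint of what went in
    output_hash: str  # fingerprint of what came out


def apply_with_receipt(step: str, source: str, fn: Callable[[Any], Any],
                       value: Any, trail: List[Receipt]) -> Any:
    """Run fn on value and append a receipt for the step to trail."""
    result = fn(value)
    trail.append(Receipt(step, source, digest(value), digest(result)))
    return result


def chain_is_intact(trail: List[Receipt]) -> bool:
    """Each step's input must be exactly the previous step's output."""
    return all(a.output_hash == b.input_hash for a, b in zip(trail, trail[1:]))


# Usage with hypothetical data and step names.
trail: List[Receipt] = []
raw = {"amount": "12.50", "currency": "eur"}
parsed = apply_with_receipt(
    "parse_amount", "ledger.csv",
    lambda r: {**r, "amount": float(r["amount"])}, raw, trail)
final = apply_with_receipt(
    "upper_currency", "parse_amount",
    lambda r: {**r, "currency": r["currency"].upper()}, parsed, trail)
```

When something breaks, `trail` is the thing you walk back: each entry names a step, and a mismatch between one step's output hash and the next step's input hash shows where the chain was cut.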

Now zoom out and look at what this does to a product’s promise.

Every prediction your system makes carries a probability distribution. If your value proposition leans on a 70% confidence score, you are implicitly selling a gamble — and most of your users don’t know you put them at the table. When the model misfires and generates a false claim, you can’t trace the root cause, because there isn’t one in any meaningful sense. The model’s internal state is opaque even to the people who trained it. In regulated industries — finance, health, anything where claims must be verifiable — that opacity isn’t a quirk. It’s a compliance violation looking for a press release.
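The arithmetic of that gamble gets worse when probabilistic steps are chained. The sketch below rests on two assumptions that may not hold in your system: that a confidence score is a calibrated probability of being right, and that steps fail independently of each other. `chain_reliability` is a hypothetical helper for illustration.

```python
from typing import Iterable


def chain_reliability(step_confidences: Iterable[float]) -> float:
    """Probability that every step is right, assuming independent steps."""
    probability = 1.0
    for confidence in step_confidences:
        probability *= confidence
    return probability


# Three chained steps at 70% each.
three_steps = chain_reliability([0.7, 0.7, 0.7])
print(f"{three_steps:.3f}")  # prints 0.343
```

Under those assumptions, three 70% steps in a row leave you right about a third of the time, which is the weakest-link problem expressed as a number.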

This is why reliability beats novelty. A product that can be debugged and audited earns trust because it fails in known ways. You can’t debug a flow you don’t understand, and you can’t promise what you can’t debug. Deterministic logic has the Lindy effect on its side — the longer it works, the longer it keeps working. Black‑box mimicry has the opposite curve. Users don’t abandon plausible‑but‑inconsistent products in a single moment of betrayal. Unless they ate the mushroom from the cover illustration, they leave in the slow erosion that follows the third or fourth “sorry for the confusion.”

If your product’s promise is wired to AI outputs, you are not shipping software — you are running a statistical casino on someone else’s behalf.

Ground the layers that need to be grounded. Nurture verifiable input only. Receipts at every transformation. A chain you can walk back when something breaks.

Only then can you claim that your product knows what it does, not just seems to.